You want my opinion? Then follow my blog, my Fediverse account. And NOT my LinkedIn profile!

Today I read a post on LinkedIn (in German) whose author criticised that too few IT people speak about their opinions, their views, their stances on various topics. He was sad that so much know-how and so many stories from real projects were left untold.

And I must admit that, at first, I didn't understand what he meant. I was all like:

"What is he talking about? I read a dozen or so posts each day from (former) colleagues, people I met at various IT or CCC events, or people I just happen to follow, and many of those posts are highly political. Or they take a technical deep dive into some obscure technology (sometimes even from the 1970s) in which the author has taken an interest. I don't get his point."

Only then did I realize: Was he referring solely to LinkedIn?

And that explained a lot. Regrettably, I must admit: I instantly had several prejudices against that person.

This led to my writing the following two comments (which I translated into English):

I have to disagree. The IT bubble, at least the one I'm in, is very political. But not on LinkedIn, because that's completely the wrong place for many people. I also had this experience myself when I was researching the status of various alternatives to Google apps for Android mobile phones and noticed how many Russian open source developers have suddenly gone completely silent in the last 3-4 years. Before, the GitHub activity bars were a bright, lush green. Regular posts in the XDA Developers forum, etc. And then, from one day to the next: Nothing. Silence. Completely.

I wrote about it here on LinkedIn. Made a reference to the Ukraine war and Russian recruitment for their war of aggression. And yes, I also got carried away with a ‘4-letter-word Putin!’. (Note: I meant "Fuck Putin!" which I obviously couldn't write again on LinkedIn.)

The result? Less than 10 minutes later, the post was set to invisible. I objected twice, but each time I was automatically rejected by the system.

So sorry, but if you want to see the politically active IT scene: Get out. Get out of the silos of a Silicon Valley tech company. Into the private blogs, into the Fediverse.

I also have a private blog. I'm generally not very reserved with my private opinions. And fortunately, I've received much more praise and recognition for this than criticism.

But not everyone has this luxury. Many companies have very strict social media guidelines on how you are allowed to appear as an employee of the company. And there are court rulings that say that simply mentioning your employer in your profile means that these rules apply and you have to comply with them, as you are no longer 100% private.

And some people simply don't want to express their political opinions under their real name. Be it for protection or because they value privacy.

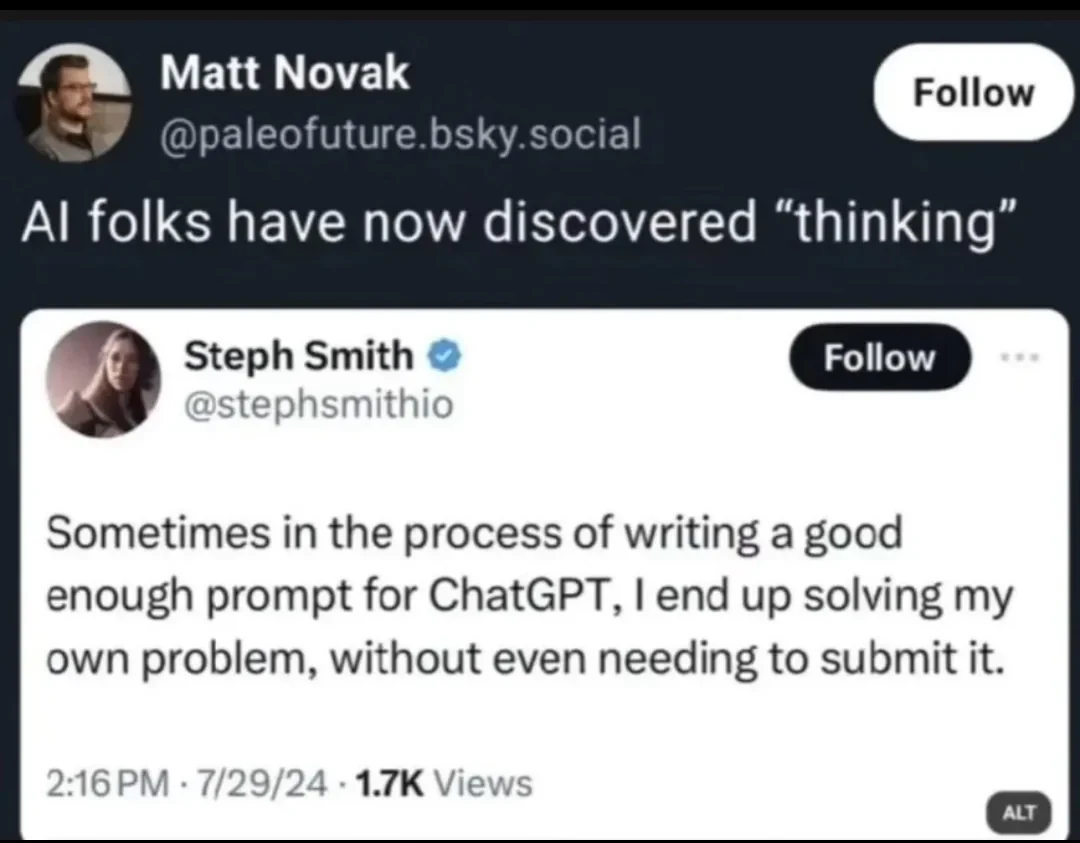

In addition, many IT professionals have a very well-founded (and justified) aversion to so-called social networks. Their content is too soft. Too auto-moderated. Too undemocratic when it comes to appeals against content offences, TOS, etc.

Add to that the fact that many networks like LinkedIn are perceived as nothing more than a business horse show (which, unfortunately, they often are).

Yes, LinkedIn is not a good platform if you are interested in IT. For that I recommend the various blogs, Fediverse accounts, some forums (if they are still alive), newsgroups (if they are still alive) and IRC channels (if they are... you get the gist).

Why?

- IT people are technical; they dislike business mumbo-jumbo and attention farming. They want to write a text about something they care about and discuss it with like-minded individuals. LinkedIn is completely the wrong platform for that.

- Also, technical content on LinkedIn gets far fewer views, interactions, etc. Believe me, I can compare the views here on my blog with those for my content on LinkedIn.

- Well, yeah, because IT people usually don't like automated feeds that they can't configure. It's much more enjoyable to look in your RSS reader and see which one of the blogs you follow has posted something new.

- Would you write huge technical texts on LinkedIn knowing that your audience is on a completely different platform? You wouldn't. That's why I blog here and just do a post on LinkedIn to redirect a few people from LinkedIn towards my blog.

- IT people are generally very prejudiced towards social networks operated by commercial companies. They are well aware of the downsides of Facebook/Meta, Twitter/X, Google Plus, Instagram, TikTok and yes - also LinkedIn. That these networks are about as far away from a democratic platform as you can get. That moderation is largely automated with no chance of "getting a human on the line". Why should we make ourselves dependent on that?

- LinkedIn doesn't even provide a button to copy the link from a post into your clipboard! All actions are aimed at keeping people on LinkedIn. LinkedIn/Microsoft don't want you to drive people away from LinkedIn! And IT people seriously hate silos and gatekeeping.

- Many companies have social media guidelines that make it impossible to engage in meaningful conversations about sensitive topics.

- IT people value their privacy. If they have a LinkedIn account, it shows their real name and employer. And maybe they don't want a connection between the two.

- Some may see blogging as a hobby that they do in their spare time, so they stay away from LinkedIn.

And there are probably more points I forgot to mention...